- Privacy-by-design: Build security measures like encryption and data minimization directly into AI systems from day one.

- Rising regulations: By 2026, half of the world’s governments will require AI-specific privacy compliance. Key laws include GDPR, CCPA, and the EU AI Act.

- Big risks: 38% of employees admit to sharing sensitive data, and “shadow AI” tools without oversight create vulnerabilities.

- NIST Privacy Framework 1.1: Released in April 2025, this framework helps manage AI privacy risks effectively.

Privacy Laws That Affect AI Compliance

The rules surrounding AI privacy are becoming more intricate, making it harder for businesses to stay compliant. For companies using AI-driven communication tools, understanding these regulations is essential. Falling short can lead to heavy fines and disruptions in operations.

US Privacy Laws: CCPA, CDPA, and Others

In the United States, privacy regulations vary by state, forming a patchwork of laws that businesses must navigate. The California Consumer Privacy Act (CCPA) and Virginia’s Consumer Data Protection Act (CDPA) are two of the most prominent, but they are just part of the broader regulatory picture. As of January 2025, several states, including Delaware and Iowa, have introduced new privacy laws with stricter data handling requirements. Each law has its own provisions, so businesses need to adapt their practices to meet these varying standards.

The CCPA and CDPA emphasize consumer rights and transparency, while newer laws require explicit consent for data use and the ability to correct personal data. Additionally, organizations must provide clear notices, explanations, and appeal processes for decisions made by high-risk AI systems.

AI tools, especially those that process communications or generate summaries, must be transparent about their decision-making. Companies are also required to secure explicit consent for handling sensitive data and to implement strong data protection measures. The workload has intensified, with a significant rise in Data Subject Requests (DSRs) putting pressure on businesses to handle these requests quickly and efficiently.

Global Standards: GDPR and the EU AI Act

European regulations, like the General Data Protection Regulation (GDPR) and the EU AI Act, set the benchmark for AI privacy compliance worldwide. U.S. companies operating internationally must adhere to these strict standards or face severe financial penalties.

The GDPR lays out essential requirements for AI systems. For example, Article 25 focuses on “Data protection by design and by default”, while Article 32 mandates robust “Security of processing.” These rules require organizations to embed privacy safeguards directly into their AI systems.

The EU AI Act introduces a risk-based framework, categorizing AI tools based on their potential impact. High-risk systems face tight controls, including requirements for transparency, human oversight, and detailed documentation. Communication tools that handle personal data must comply with GDPR while also meeting additional AI-specific obligations under the EU AI Act. For instance, companies must perform Data Protection Impact Assessments (DPIAs) as outlined in GDPR Article 35, especially when deploying AI tools that process significant amounts of personal data or make automated decisions impacting individuals. The financial stakes for non-compliance are steep, with enforcement actions resulting in hefty fines.

NIST Privacy Framework and AI Risk Management

NIST emphasizes that managing privacy risks properly “can make AI systems more trustworthy and support responsible AI practices.” Rather than replacing tools like Data Protection Impact Assessments, the NIST Privacy Framework 1.1 (PF 1.1) complements them by focusing on privacy risks unique to AI systems, including large language models and automated decision-making tools.

For companies deploying AI communication tools, NIST recommends adopting a risk management strategy to identify, assess, and mitigate privacy concerns. This approach helps businesses balance the productivity gains of AI with strong privacy safeguards, supporting compliance without sacrificing efficiency.

To keep up with the fast-changing regulatory environment, companies need flexible frameworks and agile processes that can adapt to new rules across different jurisdictions. Compliance is no longer a static goal but an ongoing effort, requiring constant monitoring and updates. This proactive strategy lays the groundwork for tackling the specific privacy risks tied to AI-driven tools.

Main Privacy Risks in AI-Driven Tools

As AI adoption accelerates, enterprises face growing challenges in addressing the privacy risks inherent in AI design. The rapid integration of AI platforms often outpaces the implementation of robust security measures, leaving organizations vulnerable. To fully harness the benefits of AI without compromising data protection, these risks must be carefully managed.

The urgency is clear. Gartner reports that 85% of organizations are already using AI services. This widespread adoption has fueled concerns about AI-enabled cyberattacks and misinformation, which are expected to rank among the most pressing risks in 2024. Coupled with evolving compliance demands, these trends have contributed to a rise in data breaches and privacy incidents.

Data Exposure and Unauthorized Access

AI systems create new vulnerabilities for data exposure, often surpassing the capabilities of traditional security frameworks. Unlike conventional software, which operates with predictable data flows, AI tools frequently process unstructured data in complex ways, making them harder to monitor effectively.

One major issue stems from misconfigured access controls and insecure data pipelines. AI systems often pull data from multiple sources to generate insights, summaries, or transcriptions. Each connection point presents a potential risk for unauthorized access. This is particularly concerning for AI-powered communication tools that handle sensitive information, such as confidential conversations, meeting recordings, or private documents.

Data breaches involving AI systems can have especially severe consequences. Many platforms process personally identifiable information (PII) without users being fully aware. For example, an AI tool transcribing a meeting might inadvertently process sensitive data, such as employee Social Security numbers or customer payment details. If proper data handling protocols are not in place, this information could be exposed or stored insecurely, leading to regulatory penalties, reputational harm, and a loss of stakeholder trust.

Recent cases of real-time location data misuse on social media platforms highlight how AI-driven features can unintentionally expose sensitive information, triggering legal scrutiny and regulatory action. But the risks don’t stop at data breaches. AI bias introduces another layer of privacy concerns.

AI Bias and Privacy Problems

AI bias poses a unique privacy risk because its effects can be subtle yet far-reaching. Biased algorithms can make flawed decisions about data processing, access controls, or content filtering, leading to privacy violations that disproportionately impact certain groups. These issues often go unnoticed, making them particularly challenging to address.

The privacy implications of AI bias can manifest in unexpected ways. For instance, an AI-powered communication tool might flag messages from specific demographic groups for extra scrutiny. This could unintentionally expose sensitive details, such as health conditions, financial circumstances, or personal relationships, to administrators who otherwise wouldn’t have access. Such violations can persist for extended periods before being detected, causing harm and exposing organizations to legal liabilities.

Privacy laws increasingly demand fair and transparent data processing. When AI systems exhibit bias, they undermine these principles and create compliance challenges. This underscores the importance of ongoing monitoring to identify and mitigate bias-related issues. The risks extend even further when it comes to training AI models, where sensitive data is often at stake.

Privacy Risks When Training AI Models

Training AI models presents some of the most intricate privacy challenges, as it typically involves vast datasets that may contain sensitive information. Once personal data is incorporated into an AI model’s training process, removing or regulating its influence becomes nearly impossible.

Organizations face significant risks when training AI tools on sensitive datasets. Even when data is anonymized, AI models can sometimes infer or reconstruct personal details, inadvertently violating privacy regulations. Moreover, AI systems may memorize specific data points from their training sets. This means that sensitive information used during training could be extracted through carefully crafted queries, posing a serious privacy threat. For communication platforms, this risk extends to proprietary business information, personal conversations, or confidential data used to improve the AI’s language processing capabilities.

Another major challenge arises from cross-border data transfers during training. Many AI models rely on cloud-based resources that span multiple jurisdictions, potentially transferring personal data internationally without adequate safeguards. This can conflict with regulations like the GDPR, which impose strict rules on cross-border data movement and data localization.

Training is rarely a one-time process; models are often updated with new data, making it difficult to enforce data retention policies or comply with requests to delete personal information. These ongoing updates further complicate privacy management, leaving organizations with limited control over how sensitive data is handled over time.

Best Practices for Enterprise AI Privacy

To address the risks associated with AI privacy, enterprises must adopt a layered approach that combines technical safeguards, organizational policies, and proactive risk management. These measures are no longer optional; they are essential for maintaining trust and ensuring success in today’s data-driven landscape.

Technical Protection: Encryption and Data Masking
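
As a rough illustration of two techniques this section covers, data masking and tokenization, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `mask_ssns` helper, the `TokenVault` class, the `tok_` prefix, and the simple US Social Security number pattern are hypothetical, and a production system would keep the token mapping in a hardened, access-controlled store rather than in memory.

```python
import re
import secrets

# Illustrative pattern for US Social Security numbers written as 123-45-6789.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def mask_ssns(text: str) -> str:
    """Data masking: replace anything shaped like an SSN with a placeholder."""
    return SSN_PATTERN.sub("XXX-XX-XXXX", text)


class TokenVault:
    """Tokenization: swap identifiers for random tokens.

    The same identifier always maps to the same token, so records can still
    be joined on the token without exposing the original value.
    """

    def __init__(self) -> None:
        self._token_by_value: dict[str, str] = {}
        self._value_by_token: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        if value not in self._token_by_value:
            token = "tok_" + secrets.token_hex(8)  # random, not derived from value
            self._token_by_value[value] = token
            self._value_by_token[token] = value
        return self._token_by_value[value]

    def detokenize(self, token: str) -> str:
        # Only callers with access to the vault can recover the original.
        return self._value_by_token[token]


if __name__ == "__main__":
    vault = TokenVault()
    print(mask_ssns("Employee SSN is 123-45-6789."))
    print(vault.tokenize("customer-42") == vault.tokenize("customer-42"))
```

The vault uses random tokens rather than a hash of the identifier, so a token on its own reveals nothing about the value it stands for; the trade-off is that the mapping itself becomes sensitive data that needs protecting.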

Encryption forms the backbone of AI privacy protection. Sensitive data must be encrypted both at rest and in transit, using a strong standard such as AES-256. Unlike traditional software, where data flows are predictable, AI systems handle data in complex ways. This requires robust encryption strategies, with keys managed through hardware security modules, protocols updated regularly, and the whole setup subjected to penetration testing.

Field-level encryption offers an extra layer of security by targeting specific data points, like Social Security numbers or financial details, rather than encrypting entire databases. For example, when AI tools process meeting transcripts or document summaries, this method keeps sensitive information protected even in the event of unauthorized access.

Data masking is another essential tool, replacing actual sensitive information with fictional data during model training and testing. This approach allows AI systems to learn from data without exposing real personal details. For instance, real names might be replaced with placeholders like “John Smith” or “Jane Doe”, preserving data structure while safeguarding privacy.

Tokenization complements data masking by substituting sensitive identifiers with randomized tokens. For example, a customer’s ID might be replaced with a token that maintains database relationships without exposing personal information. This is particularly useful for AI applications that track user behavior without storing identifiable details.

While these technical measures secure data at the system level, they must be supported by clear policies and strict access controls to ensure responsible handling of information.

Company Policies: Access Controls and Audits

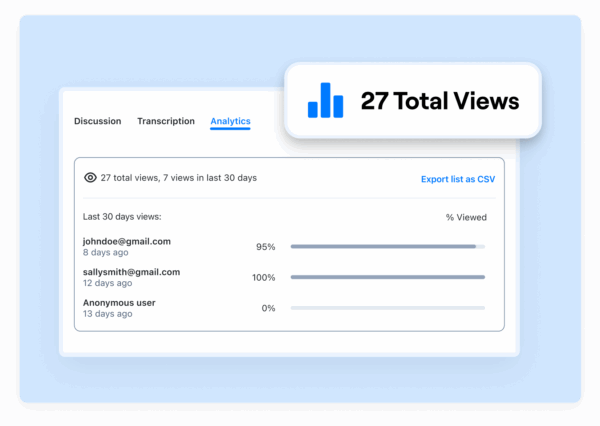

Strong organizational policies are critical for upholding privacy standards in AI implementations. Role-based access controls (RBAC) ensure that employees only access the data necessary for their specific roles, minimizing exposure to sensitive information.

Multi-factor authentication should be mandatory for accessing AI systems, with Single Sign-On (SSO) simplifying user management while maintaining security across multiple applications. This centralized approach not only streamlines authentication but also provides a clear view of system access.

Regular access reviews are vital. Automated tools can monitor access patterns and flag anomalies, while manual reviews dive deeper into potential risks. These reviews evaluate compliance with regulations and test the effectiveness of technical safeguards.

Audit trails are equally important, documenting every interaction with sensitive data. These logs track user identities, timestamps, accessed data, and actions performed. For instance, they can reveal who viewed a meeting recording, accessed a transcript, or shared a sensitive document. This accountability enables swift responses to potential breaches.

Administrative controls further enhance privacy management by centralizing user access and data handling policies. These controls allow administrators to define who can view, edit, or share AI-generated content. Features like automatic redaction and blurring can strip sensitive details from shared content, reducing the risk of unauthorized exposure. To complement these policies, regular assessments help identify new risks and ensure compliance.
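
The role-based checks and audit trails described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference design: the role names, the `ROLE_PERMISSIONS` table, and the `authorize` and `AuditLog` names are all hypothetical, and a real deployment would write audit entries to tamper-resistant storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping (view, edit, share).
ROLE_PERMISSIONS = {
    "viewer": {"view"},
    "editor": {"view", "edit"},
    "admin": {"view", "edit", "share"},
}


@dataclass
class AuditLog:
    """In-memory audit trail: who did what, to which resource, and when."""

    entries: list = field(default_factory=list)

    def record(self, user: str, action: str, resource: str, allowed: bool) -> None:
        self.entries.append(
            {
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "user": user,
                "action": action,
                "resource": resource,
                "allowed": allowed,
            }
        )


def authorize(user: str, role: str, action: str, resource: str, log: AuditLog) -> bool:
    """Allow the action only if the role grants it; log every attempt."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.record(user, action, resource, allowed)
    return allowed


if __name__ == "__main__":
    log = AuditLog()
    print(authorize("dana", "viewer", "view", "meeting-recording-1", log))
    print(authorize("dana", "viewer", "share", "meeting-recording-1", log))
    print(len(log.entries))
```

Denied attempts are logged alongside allowed ones, since a run of refusals is often the anomaly an access review is looking for.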

Data Protection Impact Assessments (DPIA)

Data Protection Impact Assessments (DPIAs) provide a proactive way to manage privacy risks. Under GDPR Article 35, a DPIA is required when processing is likely to result in a high risk to individuals. Article 35(3) names cases such as systematic and extensive automated evaluation of individuals (including profiling) and large-scale processing of special categories of data, both of which AI systems can fall under. Instead of reacting to privacy breaches, these assessments help organizations identify and address risks early in the development process.

The DPIA process involves mapping all AI systems to understand data flows, assessing whether data usage is necessary and proportionate, and collaborating with data protection officers, IT security teams, legal experts, and even affected individuals. This collaborative approach ensures that potential risks to individuals’ privacy are thoroughly evaluated.

For example, a financial services company planning to deploy an AI-powered customer support chatbot conducted a DPIA. They discovered that the training data included sensitive customer transaction details. As a result, they implemented data minimization, enhanced encryption, and stricter access controls, reducing the likelihood of data leaks and supporting compliance with privacy regulations.

Ongoing documentation and review are crucial for keeping DPIAs relevant as AI systems evolve. Whether models are updated, new features are added, or data sources change, regular updates to DPIAs help organizations stay ahead of emerging challenges and demonstrate a commitment to responsible data use.

The NIST Privacy Framework 1.1 (2025) offers updated guidance for managing privacy risks specific to AI, such as accidental exposure of personal information, statistical bias, and misuse of likeness rights. Integrating these frameworks into enterprise risk management practices ensures comprehensive coverage of both traditional and AI-specific concerns. Organizations that view privacy compliance as a strategic advantage often build stronger consumer trust and stand out in competitive markets.

By taking a comprehensive approach to privacy, enterprises lay a solid foundation for integrating advanced privacy features into their communication tools.

Privacy Features in Zight for Enterprises

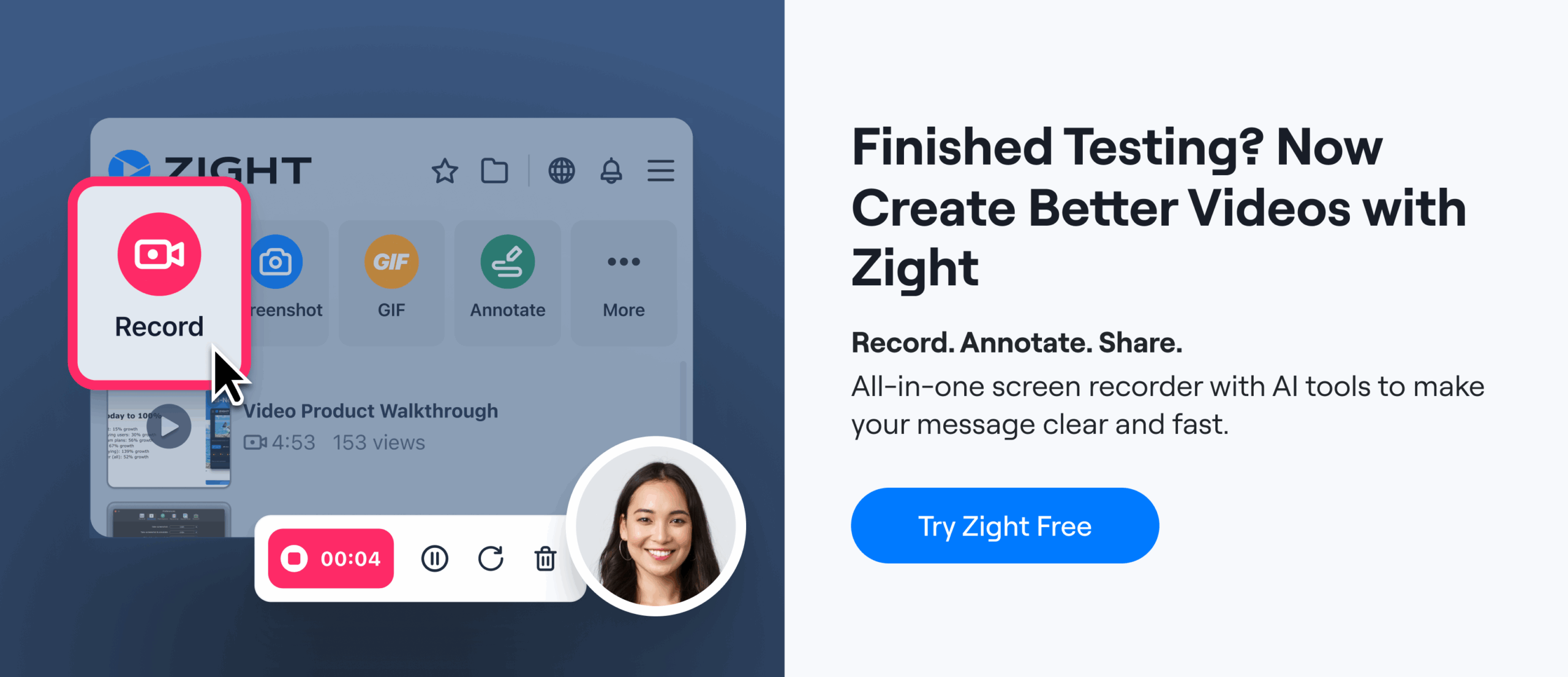

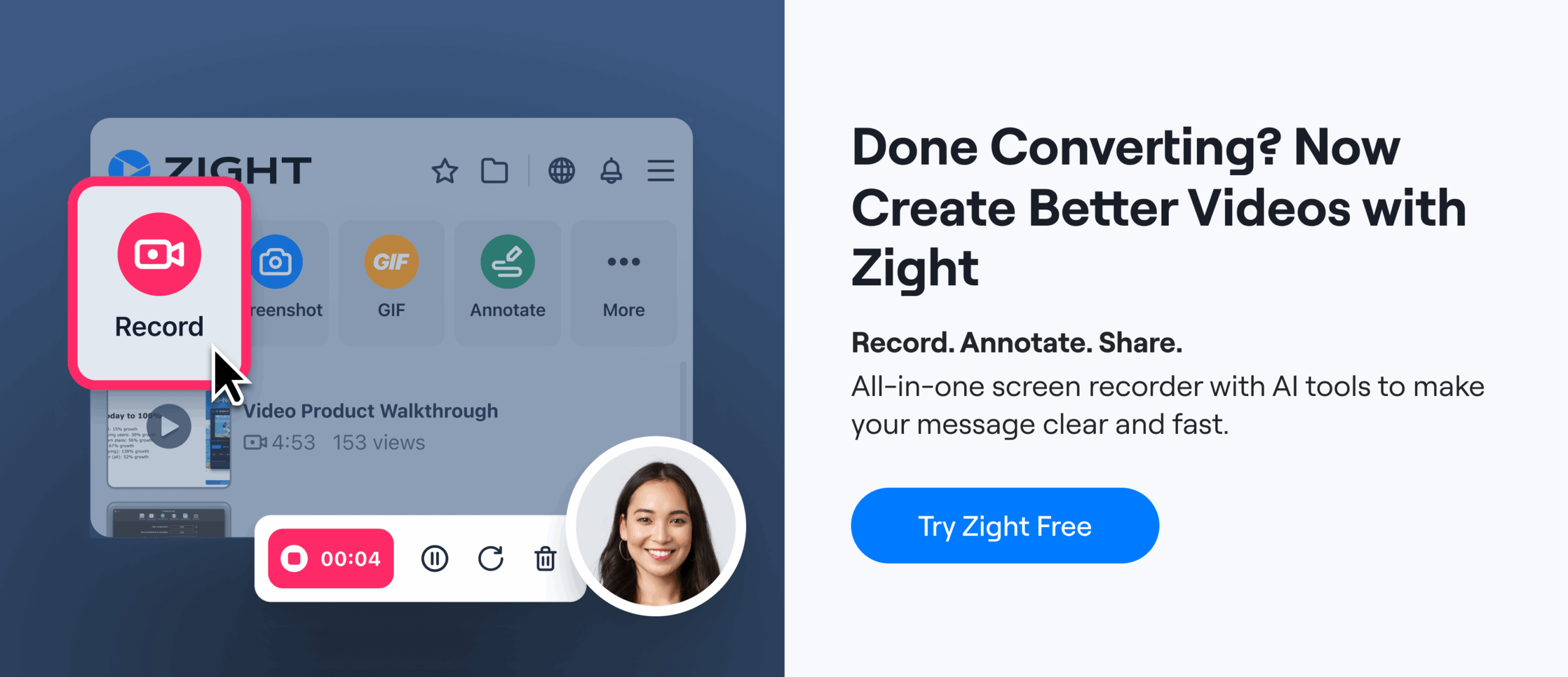

Zight incorporates privacy-by-design principles into its AI-driven visual communication platform, ensuring sensitive data is protected while offering advanced tools tailored for enterprise needs. Addressing the privacy challenges discussed earlier, Zight provides solutions that cater specifically to businesses.

AI-Powered Tools with Privacy at the Core

Zight’s AI tools, designed for transcription in over 50 languages, video summaries, and translations, employ end-to-end encryption and strict data minimization practices to safeguard sensitive meeting content. The platform ensures that AI-generated summaries and automatic titles are processed in secure environments, retaining only the data essential for functionality. This approach minimizes exposure risks during data handling.

“The new AI features in Zight are not invasive at all and yet provide a ton of value. That’s a very well thought out implementation.” – Kyle Eberle, Enterprise Architect, Bloomerang

Zight also offers blur and redact tools for visual content. Users can instantly pixelate or remove confidential information from screenshots and videos before sharing. This helps prevent accidental exposure of sensitive data.

“A huge plus I haven’t found in other apps is that you can ‘pixel’ all the sensitive information perhaps you don’t want to share.” – Luisa Zapata García, Strategic Customer Success Manager, Globalization Partners

This feature is especially useful for enterprises working with client data, financial records, or proprietary content. It allows videos to be transformed into step-by-step guides, bug reports, or standard operating procedures while maintaining strong privacy protections.

Compliance with Leading Privacy Standards

Zight aligns with major privacy regulations, including GDPR, CCPA, and emerging state laws. The platform employs clear user consent mechanisms and automated tools for handling Data Subject Requests, supporting compliance at every step.

Zight stays ahead of changes in privacy laws, regularly updating its compliance measures to meet new requirements, such as those introduced in Delaware, Iowa, and New Jersey. Transparent data processing practices and clear privacy notices help enterprises demonstrate compliance during audits.

By adhering to data minimization principles, Zight collects and processes only the information necessary for its services. Customizable data retention settings further reduce risk while supporting audit standards.

Enterprise-Grade Security Measures

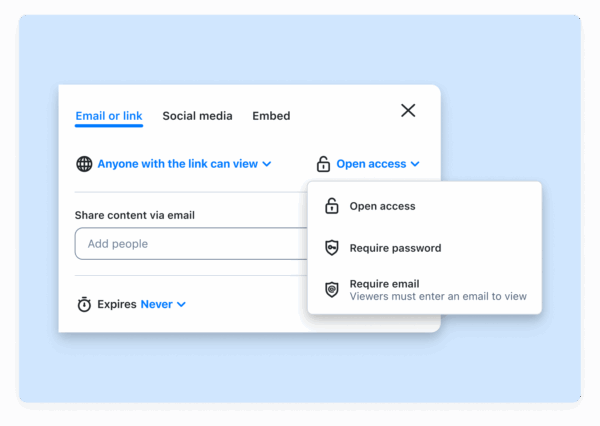

Zight provides a layered security framework, including single sign-on (SSO), granular administrative controls, and real-time monitoring. These features simplify user management and enhance protection, extending across integrations with platforms like Slack, Microsoft Teams, and Jira.

“Easy to use, high quality, customizable sharing settings/permissions… love it!” – Ellie Peterson, Senior Product Manager: Partner Innovations, Qualtrics

Advanced analytics and monitoring tools give enterprises visibility into access patterns and usage behaviors, ensuring accountability and supporting audit requirements. Flexible deployment options, such as enterprise-grade cloud storage and self-hosting, allow organizations to meet specific regulatory or internal security needs.

Custom data retention policies, paired with automated deletion features, ensure that data is not stored beyond its useful lifecycle. For example, a US-based technology company used Zight’s SSO, custom retention settings, and AI-powered transcription tools to comply with both CCPA and GDPR, reducing compliance risks while improving audit readiness. Zight’s dedicated compliance team actively monitors changes in global and US privacy laws, ensuring the platform remains aligned with the latest legal and industry standards.
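
To make the general idea of a retention limit with automated deletion concrete, here is a minimal Python sketch. It does not represent Zight’s implementation or API; the `StoredItem` type, the `purge_expired` function, and the 90-day figure in the usage example are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class StoredItem:
    item_id: str
    created_at: datetime  # timezone-aware creation time


def purge_expired(
    items: list[StoredItem],
    retention_days: int,
    now: Optional[datetime] = None,
) -> tuple[list[StoredItem], list[str]]:
    """Split items into those still within retention and IDs to delete.

    Items created before (now - retention_days) are treated as expired.
    """
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=retention_days)
    kept = [item for item in items if item.created_at >= cutoff]
    purged_ids = [item.item_id for item in items if item.created_at < cutoff]
    return kept, purged_ids


if __name__ == "__main__":
    now = datetime(2025, 6, 1, tzinfo=timezone.utc)
    items = [
        StoredItem("old-recording", datetime(2025, 1, 1, tzinfo=timezone.utc)),
        StoredItem("recent-transcript", datetime(2025, 5, 20, tzinfo=timezone.utc)),
    ]
    kept, purged_ids = purge_expired(items, retention_days=90, now=now)
    print([item.item_id for item in kept], purged_ids)
```

Returning the purged IDs rather than deleting silently leaves room to write each deletion to an audit trail before the data is actually removed.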

Future Changes in AI Privacy Standards

The fast-evolving landscape of AI privacy is pushing businesses to stay ahead of new regulations to avoid hefty penalties and ensure compliance.

Expected Changes in US and Global Laws

AI privacy regulations are becoming more intricate, especially in the United States. Starting in 2025, eight new state privacy laws will come into effect, including the Delaware Personal Data Privacy Act, Iowa Consumer Data Protection Act, Nebraska Data Privacy Act, New Hampshire Consumer Data Protection Act, New Jersey Data Privacy Act, Tennessee Information Protection Act, Minnesota Consumer Data Privacy Act, and Maryland Online Data Privacy Act.

Each of these laws introduces distinct requirements for handling AI and data. For businesses operating across multiple states, this means navigating various consent protocols, data retention rules, and disclosure obligations. Tailored compliance strategies will be essential to manage this regulatory maze.

On the federal level, the US Privacy Act Modernization Act of 2025 is under discussion. If passed, it could enhance individual rights regarding government data collection. While this might bring some standardization, it could also introduce additional compliance layers, particularly for companies dealing with government contracts or sensitive data.

Internationally, the European Union is moving forward with its “ProtectEU” initiative, which aims to allow lawful access to encrypted data for law enforcement by 2030. This raises major privacy and security challenges, especially for companies relying on encrypted communication tools, potentially forcing them to rethink their encryption practices. Cross-border data transfers are also under greater scrutiny, complicating compliance for AI systems processing data across multiple jurisdictions.

These developments highlight the growing need for privacy solutions that can scale alongside organizational growth.

Need for Scalable Privacy Solutions
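
One way to picture the automated request handling this section describes is a small router that assigns each Data Subject Request a queue and a due date based on its type and legal regime. The Python sketch below is illustrative only: the `DataSubjectRequest` type, the queue naming, and especially the response windows in `RESPONSE_WINDOW_DAYS` are placeholder assumptions that must be checked against the applicable statute before any real use.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Placeholder response windows in days; confirm the real deadlines
# (and any extensions) with counsel for each regime.
RESPONSE_WINDOW_DAYS = {"GDPR": 30, "CCPA": 45}
DEFAULT_WINDOW_DAYS = 30

# Request types named in the text: access, correction, deletion, opt-out.
REQUEST_TYPES = {"access", "correction", "deletion", "opt_out"}


@dataclass(frozen=True)
class DataSubjectRequest:
    request_id: str
    regime: str        # e.g. "GDPR" or "CCPA"
    request_type: str  # one of REQUEST_TYPES
    received: date


def due_date(request: DataSubjectRequest) -> date:
    """Deadline for responding, based on the regime's response window."""
    days = RESPONSE_WINDOW_DAYS.get(request.regime, DEFAULT_WINDOW_DAYS)
    return request.received + timedelta(days=days)


def route(request: DataSubjectRequest) -> str:
    """Name of the work queue for this request, e.g. 'ccpa-deletion'."""
    if request.request_type not in REQUEST_TYPES:
        raise ValueError(f"unknown request type: {request.request_type}")
    return f"{request.regime.lower()}-{request.request_type}"


if __name__ == "__main__":
    req = DataSubjectRequest("r-1", "CCPA", "deletion", date(2025, 1, 1))
    print(route(req), due_date(req))
```

Keeping the deadlines in a single table means a change in one jurisdiction’s rules becomes a one-line update rather than a code change scattered across the workflow.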

The rise in Data Subject Requests (DSRs) is adding significant operational strain on businesses. As consumers become more aware of their rights to access, correct, or delete personal data, organizations are seeing a surge in requests that must be addressed within strict deadlines. Handling these requests manually is no longer practical. Companies that fail to meet these obligations risk legal consequences and reputational harm. The increasing use of generative AI and the flow of unstructured data between systems only add to the complexity of these requests.

To address this, automated DSR management platforms are becoming a necessity. These platforms integrate with AI and data systems, enabling real-time processing of requests for access, correction, deletion, and opt-out. AI-powered tools that categorize and route requests based on data type and jurisdiction help reduce manual effort while supporting compliance.

As regulations evolve, businesses must adopt scalable solutions that bridge AI governance and privacy compliance. This has driven demand for platforms capable of automating workflows, maintaining audit trails, and managing compliance reporting across various privacy frameworks.

Staying Updated with AI Privacy Standards

Keeping pace with changing laws and adopting scalable solutions is just the beginning. Organizations must also continuously refine their privacy practices. The NIST Privacy Framework 1.1, released in April 2025, provides guidance tailored to managing privacy risks unique to AI systems. This includes addressing issues like inadvertent exposure of personal information during AI training, biases in AI-assisted decisions, and misuse of AI for activities like creating deepfakes.

Businesses should establish dedicated privacy teams to monitor regulatory changes and actively participate in industry forums. Subscribing to updates from organizations like NIST and the EU, along with regular policy reviews, can help maintain compliance. Training employees on evolving privacy standards is equally critical. Many companies are finding success by using compliance management platforms and consulting legal experts to quickly adapt to new requirements and avoid gaps.

Ryan Johnson, chief privacy officer at The Technology Law Group, emphasizes the need for data minimization, model transparency, and robust governance for generative AI. His advice reflects a broader industry shift from mere checkbox compliance to actively managing data sovereignty and privacy risks.

Tui Leauanae, solutions architect at Protegrity, advises classifying and governing unstructured data to prevent vulnerabilities. This is particularly relevant as AI systems increasingly process diverse data types from a variety of sources.

To tackle the interconnected challenges of privacy, security, and AI risks, NIST recommends integrating its Privacy Framework 1.1 with the AI Risk Management Framework and Cybersecurity Framework 2.0. This comprehensive approach equips organizations to manage the overlapping risks in today’s complex enterprise environments.